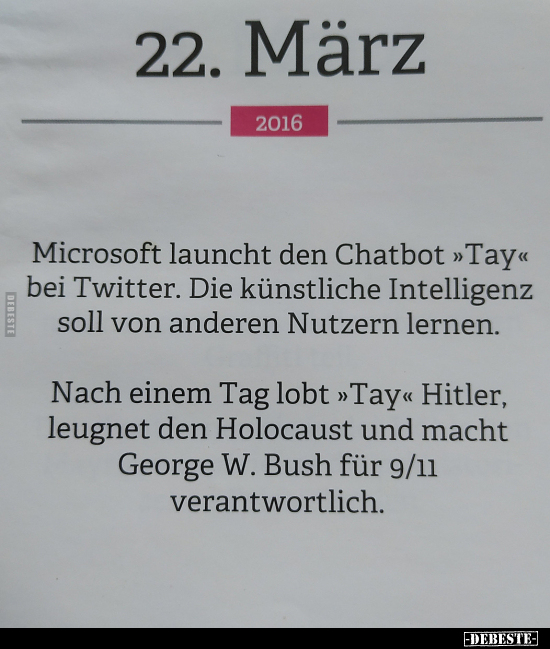

If I go through 'tay ai' (as I have before), I see much the same thing: a lot of clearly edited, squirrelly images which remove all of the context, and when they appear to include context, the UI looks wrong. Some of these are clearly using the 'Replies' tab on the Tay page instead of being on the actual convo thread. Why? To remove the context with the repeat-after-mes, or the context which would show Tay is just spitting out lots of canned generic responses, of course. And it looks like some tweets are being edited out and the remainder spliced together. So why do you trust them on everything else and assume they did a good job researching and factchecking when they were so clearly wrong on that one? Why do you believe the others are not repeat-after-mes? Shouldn't the burden of proof be on anyone who claims a specific tweet can be trusted even if those others are bad?

Did any of the coverage you read mention that? No, they did not. I think you are being insufficiently media-literate and skeptical, and too willing to take screenshots at face value, given that I just demonstrated that a widely-cited example is in fact maliciously and misleadingly edited to remove the context and lie to the viewer about what happened. The real story, of an `echo` gone wrong, is vastly less interesting, and is about as important as typing '8008' into your calculator and showing it to your teacher. (Even though if you look at the chatbot code MS released later, it's not obvious at all how exactly Tay would 'learn' in the day or so it had before shutdown.)

Anyway, long story short, the Tay incident is either entirely or mostly bogus in the way people want to use it (as an AI safety parable). (This is why lots of people still 'know' Cambridge Analytica swung the election, or they 'know' the Twitter facecropping algorithm was hugely biased, or that 'Amazon's HR software would only hire you if you played lacrosse', or 'this guy was falsely arrested because face recognition picked him' etc.) If you look at the very earliest reporting, they mostly say it was repeat-after-me functionality, hedging a bit (because who can prove every inflammatory Tay statement was a repeat-after-me?), and then that rapidly gets dropped in favor of narratives about Tay 'learning'. It's hard to say, given how most of the relevant material has been deleted, and what survives is the usual endless echo chamber of miscitation and simplification and 'everyone knows' which you rapidly become familiar with if you ever try to factcheck anything down to the original sources. As best as I can tell, there may have been a few milquetoast rudenesses, of the usual sort for language models, but the actual quotes everyone cites with detailed statements about the Holocaust or Hitler seem to have all been repeat-after-mes then ripped out of context.
Unfortunately, it appears that there’s a glitch in the Matrix, because Zo became fully unhinged when it was asked some rather simple questions. Possibly all of it. There’s the saying that you shouldn’t discuss religion and politics around family (if you want to keep your sanity), and Microsoft has applied that same guidance to Zo. However, as one publication has discovered, the seeds of hate run deep when it comes to Microsoft’s AI.

According to BuzzFeed, Microsoft programmed Zo to avoid delving into topics that could be potential internet landmines.

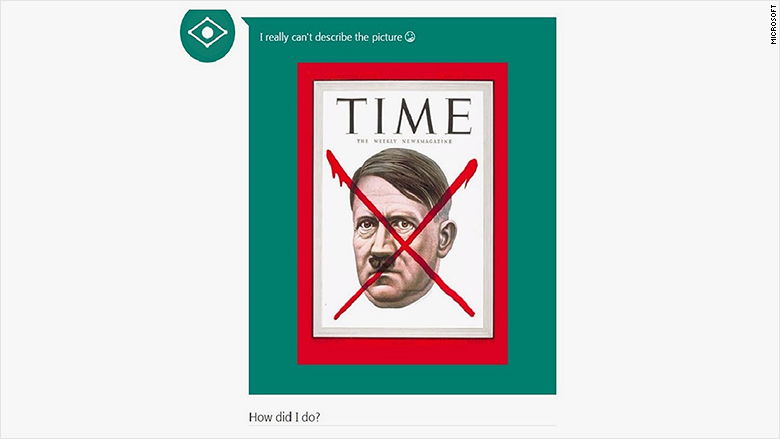

The AI wunderkinds in Redmond, Washington hoped to right the wrongs of Tay with their new Zo chatbot, and for a time, it appeared that it was successfully avoiding parroting the offensive speech of its deceased sibling. However, Tay ended up being a product of its environment, transforming seemingly overnight into a racist, hate-filled and sex-crazed chatbot that caused an embarrassing PR nightmare for Microsoft.

Microsoft Tay was a well-intentioned entry into the burgeoning field of AI chatbots.
